The core TOC insights are focused on improving the current business. TOC has contributed a lot to the first three parts of SWOT: strengths, weaknesses and opportunities. What is left is to contribute to the early identification of threats and to developing the best way to deal with them. Handling threats is not so much about taking new initiatives to achieve more and more success. It is about preventing the damage caused by unanticipated changes or events. Threats could come from inside the organization, like a major flaw in one of the company’s products, or from outside, like the emergence of a disruptive technology. TOC definitely has the tools to develop the processes for identifying emerging threats and coming up with the right way to deal with them.

The last time I wrote about identifying threats was in 2015, but time brings new thoughts and new ways to express both the problem and the direction of the solution. The importance of the topic hardly needs any explanation; however, it still does not get enough attention from management. Risk management covers only part of the potential threats, usually only for very big proposed moves. My conclusion is that managers ignore problematic issues when they don’t see a clear solution.

There are only a few environments where considerable effort is devoted to this topic. Countries, through their armies and police forces, have created dedicated sub-organizations, called ‘intelligence’, to identify well-defined security threats. While most organizations use various control mechanisms to face a few specific anticipated threats, like alarm systems, basic data protection and accounting methods to spot unexplained money transfers, many other threats are not properly controlled.

The nature of every control mechanism is to identify a threat and either warn against it or even take automatic steps to neutralize the risk. My definition of ‘control mechanism’ is: “A reactive mechanism to handle uncertainty by monitoring information that points to a threatening situation and taking corrective actions accordingly.”
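To make the definition concrete, here is a minimal Python sketch of a generic control mechanism, assuming a single numeric signal compared against a fixed threshold. The class and field names are hypothetical, chosen only for illustration; real mechanisms may watch several signals at once.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ControlMechanism:
    """Reactive monitor: read one signal, compare it with a threshold,
    and run a corrective action when the threshold is crossed."""
    name: str
    read_signal: Callable[[], float]          # hypothetical source of monitored information
    threshold: float                          # level that points to a threatening situation
    corrective_action: Callable[[float], str]

    def check(self) -> List[str]:
        value = self.read_signal()
        if value >= self.threshold:
            # Threat signalled: issue a warning and run the corrective action.
            return [f"WARNING {self.name}: {value}", self.corrective_action(value)]
        return []  # nothing threatening observed


if __name__ == "__main__":
    smoke = ControlMechanism("smoke", lambda: 0.9, 0.5, lambda v: "sprinklers on")
    print(smoke.check())
```

A smoke detector fits this shape directly: the signal is smoke density, the threshold is the alarm level, and the corrective action is sounding the alarm or starting the sprinklers.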

While the topic does not appear in the TOC BOK, some basic TOC insights are relevant for developing a structured process that deals with identifying the emergence of threats. Another process is required for planning the actions to neutralize the threat, and maybe even to turn it into an opportunity.

Any such process is much better prepared when the threat is recognized a priori as probable. For instance, quality assurance of new products should include special checks to prevent launching a new product with a defect, which would force a recall of all the units sold. When a product is found to be dangerous, the threat is too big to tolerate. In less damaging cases the financial loss, as well as the damage to future reputation, is still high. Yet such a threat is possible for almost any company. Early identification, before the big damage is caused, is of major importance.

The key difficulty in identifying threats is that each threat is usually independent of other threats, so the variety of potential threats is wide. It could be that the same policies and behaviors that have caused an internal threat would also cause more threats, but the timing of these emerging threats could be far apart. For example, distrust between top management and the employees might cause major quality issues leading to lawsuits. It could also cause leaks of confidential information and a high number of people leaving the organization, robbing it of its core capabilities. However, which threat emerges first is subject to very high variability.

It is important to distinguish between the need to identify emerging threats and deal with them, and the need to prevent the emergence of threats. Once a threat is identified and dealt with, it is highly beneficial to analyze the root cause and find a way to prevent that kind of threat from appearing in the future.

External threats are less dependent on the organization’s own actions, even though it could well be that management ignored early signals that the threat was developing.

Challenge no 1: Early identification of emerging threats

Step 1:

Create a list of categories of anticipated threats.

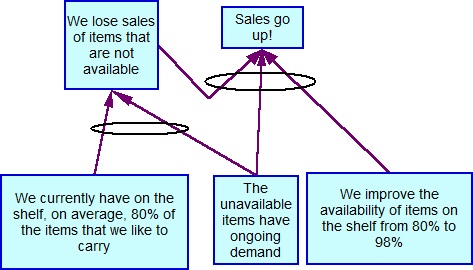

The idea is that every category is characterized by similar signals, which can be deduced by cause-and-effect logic and monitored by a dedicated control mechanism. Buffer Management is such a control mechanism, identifying threats to perfect delivery to the market.
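Buffer Management itself can be sketched in a few lines. This minimal Python sketch assumes the common convention of splitting each order’s time buffer into three equal zones (green, yellow, red), plus black for orders that are already late. The `Order` class and the function names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Tuple


@dataclass
class Order:
    order_id: str
    released: date  # when the order entered its time buffer
    due: date       # promised delivery date

    def penetration(self, today: date) -> float:
        """Fraction of the time buffer already consumed (above 1.0 means late)."""
        buffer_days = max((self.due - self.released).days, 1)  # avoid division by zero
        used_days = (today - self.released).days
        return used_days / buffer_days


def zone(p: float) -> str:
    # Assumed convention: the buffer is split into equal thirds.
    if p >= 1.0:
        return "BLACK"  # already late
    if p >= 2 / 3:
        return "RED"
    if p >= 1 / 3:
        return "YELLOW"
    return "GREEN"


def buffer_report(orders: List[Order], today: date) -> List[Tuple[str, str]]:
    """Open orders sorted by buffer status, most threatened first."""
    ranked = sorted(orders, key=lambda o: o.penetration(today), reverse=True)
    return [(o.order_id, zone(o.penetration(today))) for o in ranked]
```

Sorting by penetration rather than by zone alone keeps the most threatened order at the top even among several red orders, so attention goes where the threat to delivery is largest.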

Another example is identifying ‘hard-to-explain’ money transactions, which might signal illegal or unauthorized financial actions taken by certain employees. Accounting techniques are used to quickly point to such suspicious transactions. An important category of threats is built from temporary failures and losses that together could drive the organization to bankruptcy. Thus, a financial buffer should be maintained, so that penetration into its Red-Zone triggers special care and an intense search for ways to bring cash in.
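The financial buffer can be monitored in the same way. This is a minimal sketch, assuming the buffer is a target cash level split into thirds and that a balance below one third of it counts as the Red-Zone. The function names, the zone boundaries and the monthly granularity are hypothetical choices for illustration.

```python
from itertools import accumulate
from typing import List


def cash_zone(cash: float, buffer: float) -> str:
    """Classify available cash against the target financial buffer.
    Assumption: zones are thirds of the buffer; below one third is Red."""
    if buffer <= 0:
        raise ValueError("buffer must be positive")
    ratio = cash / buffer
    if ratio < 1 / 3:
        return "RED"  # trigger special care and an intense search for cash
    if ratio < 2 / 3:
        return "YELLOW"
    return "GREEN"


def red_zone_months(start_cash: float, net_flows: List[float], buffer: float) -> List[int]:
    """Accumulate monthly net cash flows and return the (0-based) months
    in which the running balance penetrated the Red-Zone."""
    balances = list(accumulate(net_flows, initial=start_cash))[1:]  # balance after each month
    return [i for i, cash in enumerate(balances) if cash_zone(cash, buffer) == "RED"]
```

The point of accumulating the flows is that no single monthly loss needs to look alarming on its own; the Red-Zone flag is raised by the combined effect of several temporary losses.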

Other categories, each with its own list of signals, should be created. These include quality, employee morale, and loss of reputation in the market, for instance due to too slow a pace of innovative products and services.

Much less is done today on categories of external threats. The one category of threats that is usually monitored is the state of the direct competitors. There are at least two other important categories that need constant monitoring: regulatory and economic moves that might impact specific markets, and the emergence of fast-rising competition. The latter includes the rise of a disruptive technology, the entry of a giant new competitor and a surprising change in the market’s taste.

Step 2:

For each category, build a list of signals to be carefully monitored.

Each signal should predict the emergence of a threat with good enough confidence. A signal is any effect that can be spotted in reality and that, by applying cause-and-effect analysis, can be logically connected to the actual emergence of the threat. Such a signal could be another effect caused by the threat, or a cause of the threat. For example, a red order is caused by a local delay, or a combination of several delays, which might cause the order to be late.
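Steps 1 and 2 together amount to a catalogue that maps each category of threat to its signals. A minimal Python sketch follows; the categories echo those mentioned above, but every signal name, observation key and threshold is a hypothetical placeholder that each organization would have to derive from its own cause-and-effect analysis.

```python
from typing import Callable, Dict, List

Observations = Dict[str, float]
Signal = Callable[[Observations], bool]

# Hypothetical catalogue: each category of anticipated threat lists the signals
# that cause-and-effect reasoning links to the emergence of that threat.
CATALOGUE: Dict[str, Dict[str, Signal]] = {
    "delivery": {
        "red orders": lambda obs: obs.get("red_orders", 0) > 0,
        "local delays at work centers": lambda obs: obs.get("delayed_work_centers", 0) >= 2,
    },
    "quality": {
        "returns above norm": lambda obs: obs.get("return_rate", 0.0) > 0.02,
    },
    "employee morale": {
        "resignations rising": lambda obs: obs.get("monthly_resignations", 0) > 3,
    },
}


def raised_flags(obs: Observations) -> Dict[str, List[str]]:
    """Return, per category, the names of the signals that currently fire."""
    flags: Dict[str, List[str]] = {}
    for category, signals in CATALOGUE.items():
        fired = [name for name, test in signals.items() if test(obs)]
        if fired:
            flags[category] = fired
    return flags
```

Missing observations default to a harmless value, so a category with no data simply raises no flag; whether that is the right default is itself a judgment each organization would need to make.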

When it comes to external threats, my assumption is that signals can be found mainly in news channels, social networks and other Internet publications. This makes it hard to identify the right signals in the ocean of published reports. So, focusing techniques are required to search for signals indicating that something is going to change.

Step 3:

A continual search for signals requires a formal process for checking them periodically.

This process has to be defined and implemented, including nominating the responsible people. Buffer Management works best when the computerized system displays the open orders, sorted by their buffer status, to all the relevant people. An alarm system, used to warn of a fire or a burglary, has to have a very clear and loud sound, making sure everybody is aware of what might happen.

Challenge no 2: Handling the emerging threats effectively

The idea behind any control mechanism is that once the flag is raised, based on the signals received, there is already a set of guidelines defining which actions are required first. When there is an internal threat, the urgency to react ASAP is obvious. Suppose there are signals that raise suspicions, but not full proof, that a certain employee has betrayed the trust of the organization. A quick procedure, with a well-defined line of action, has to be already in place to formally investigate the suspicion, without forgetting the presumption of innocence. When the signals point to a major defect in a new product, sales of that product have to be suspended until the suspicion is proven wrong. When the suspicion is confirmed, a focused analysis has to be carried out to decide what else to do.
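Guidelines prepared in advance can be expressed as a simple playbook lookup. This is a hypothetical Python sketch: the threat names, statuses and actions paraphrase the two examples above and are not a prescribed procedure. Any combination that is not covered falls back to escalation for focused analysis.

```python
from enum import Enum
from typing import Dict, List, Tuple


class Status(Enum):
    SUSPECTED = "suspected"
    CONFIRMED = "confirmed"
    DISPROVED = "disproved"


# Hypothetical playbook: first actions agreed on before any flag is raised.
PLAYBOOK: Dict[Tuple[str, Status], List[str]] = {
    ("product defect", Status.SUSPECTED): ["suspend sales of the product", "run focused quality checks"],
    ("product defect", Status.CONFIRMED): ["keep sales suspended", "convene focused analysis of further actions"],
    ("product defect", Status.DISPROVED): ["resume sales"],
    ("employee breach", Status.SUSPECTED): ["open formal investigation", "presume innocence until proven otherwise"],
    ("employee breach", Status.CONFIRMED): ["convene focused analysis of further actions"],
    ("employee breach", Status.DISPROVED): ["close the investigation"],
}


def first_actions(threat: str, status: Status) -> List[str]:
    """Look up the predefined first response; uncovered cases are escalated."""
    return PLAYBOOK.get((threat, status), ["escalate for focused analysis"])
```

The value lies less in the code than in the table: deciding the entries calmly in advance is what allows a fast reaction once the flag is raised.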

External threats are tough to identify and even tougher to handle. The search for signals that anticipate the emergence of threats is non-trivial. The evaluation of the emerging threat and of the alternative ways to deal with it would greatly benefit from logical cause-and-effect analysis. This is where a more flexible process has to be established.

In previous posts I have already mentioned a possible use of an insight developed by intelligence organizations: the clever distinction between two different processes:

- Collecting relevant data, usually according to clear focusing guidelines.

- Research and analysis of the received data.

Of course, the output of the research and analysis process is passed to the decision makers, who decide on the actions. Such a generic structure seems useful for threat control.
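The separation between collecting and analyzing can be sketched as two independent stages. In this minimal Python sketch the collection stage filters reports by focusing terms, and the analysis stage is only a toy stand-in for real cause-and-effect analysis: it forwards to decision makers the terms reported by at least two independent sources. The `Report` class, the keyword matching and the `min_sources` threshold are all assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass
class Report:
    source: str
    text: str


def collect(reports: List[Report], focus_terms: Set[str]) -> List[Report]:
    """Collection stage: keep only items matching the (lowercase) focusing terms."""
    return [r for r in reports if any(term in r.text.lower() for term in focus_terms)]


def analyse(collected: List[Report], focus_terms: Set[str], min_sources: int = 2) -> Dict[str, List[str]]:
    """Analysis stage (toy stand-in): a term mentioned by several independent
    sources is passed to decision makers as a candidate emerging threat."""
    sources_by_term: Dict[str, Set[str]] = {t: set() for t in focus_terms}
    for r in collected:
        for t in focus_terms:
            if t in r.text.lower():
                sources_by_term[t].add(r.source)
    return {t: sorted(s) for t, s in sources_by_term.items() if len(s) >= min_sources}
```

Keeping the two functions separate mirrors the intelligence structure: the collectors can be tuned with new focusing terms without touching how the analysts judge what they receive.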

Challenge no 3: Facing the unanticipated emergence of threats

How can threats we don’t anticipate be controlled?

We probably cannot prevent the first appearance of such a threat. But the actual damage of the first appearance might be limited. In such a case the key point is to identify the new undesired event as a potential precursor of something much more damaging. In other words, to anticipate, based on the first appearance, the full extent of the threat.

The title of this article uses the example of a serious external threat: cyber! Until recently this threat was outside the paradigm of both individuals and organizations. As the surprise of being hit by hackers, causing serious damage, became widely known, the need for strong cyber control was established. As implied, Threat Control is much wider and bigger than cyber.

An insight that could help build the capability of identifying new emerging threats while they are still relatively small is to understand the impact of a ‘surprise’. Being surprised should be treated as a warning signal that we are operating under an invalid paradigm, one that ignores certain possibilities in our reality. The practical way to recognize such a paradigm is to learn from every surprise: this learning exposes both the potential causes of the surprise and other unanticipated results. I suggest that readers refer back to my post entitled ‘Learning from Surprises’, https://wordpress.com/post/elischragenheim.com/1834

My conclusion is that Threat Control is an absolutely required formal mechanism for any organization. It would be useful to stand on the shoulders of Dr. Goldratt, understand the thinking tools he provided us, and use them to build a practical process that makes our organizations safer and more successful.