By Eli Schragenheim and Jürgen Kanz

We are told that, in order to keep up with the rapid changes in the world and to face ever fiercer competition, manufacturing organizations have to join the fourth industrial revolution, called Industry 4.0. It is a very large suite of new technologies in the field of IT, including the Internet of Things (IoT), artificial intelligence and robotics.

The slogan of Industry 4.0 claims it is highly desirable to join the revolution before the competitors do. Well, we are not sure whether the term ‘revolution’ truly fits the new digital technologies, but this is the smallest issue. The fast pace of technology should definitely force the top management team of every organization to think clearly about the impact the newest technological developments could have on the organization and its business environment. Thinking clearly is required not only for finding new ways to achieve more of the goal, but also for understanding the potential new threats that such developments might bring.

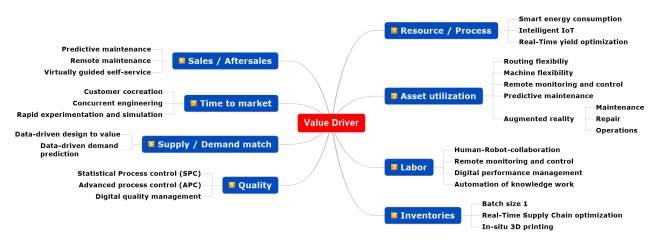

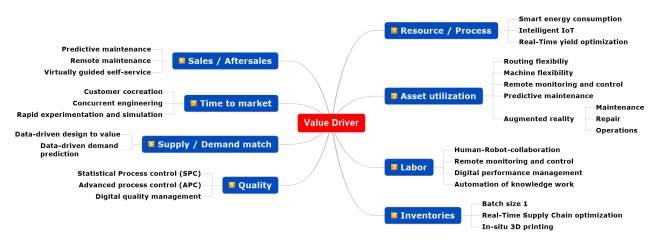

There are two significant threats that new technology might create. The first is pushing management to invest heavily in technology that is still half-baked and whose potential value is small, if it exists at all. The second is causing a loss of focus on what brings value and what does not. Trying too many ideas, and investing money and management attention in too many issues, could end in a big loss or in very low value. Just look at the wide range of areas claimed to bring value:

Image 1: Improvement areas for Industry 4.0, adapted from McKinsey Digital 2015, “Industry 4.0: How to navigate digitization of the manufacturing sector”

Applying new IT technologies and connecting known technologies is expected to lead to the following improvements per area:

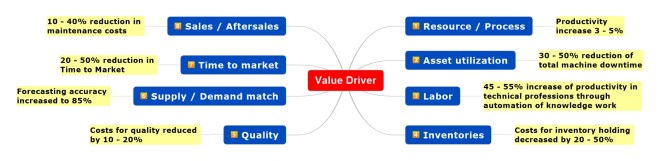

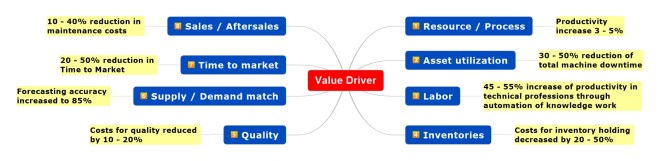

Image 2: Expected improvements, adapted from McKinsey Digital 2015, “Industry 4.0: How to navigate digitization of the manufacturing sector”

We can recognize a number of worthwhile time and cost reductions that would increase overall productivity, but what are the expectations of top managers? To gain more insight we can look, as an example, at a survey by Roland Berger Strategy Consultants with input from 300 top managers of German industries:

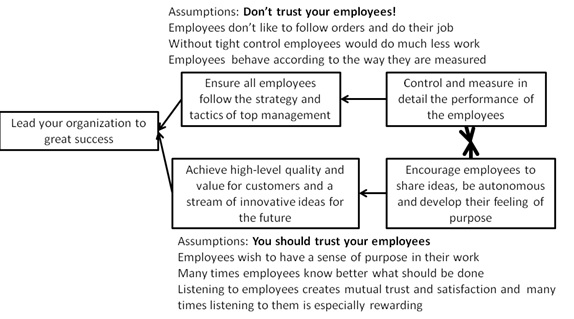

Image 3: Top-Manager expectations, adapted from “Die digitale Transformation der Industrie”, Roland Berger Strategy Consultants & BDI, https://bdi.eu/media/user_upload/Digitale_Transformation.pdf, last download 09/24/18

A large group of executives (43%) targets only cost reduction with the help of Industry 4.0, while other managers want more sales from new products (32%) or more sales from existing products (10%). Achieving both more sales and cost reduction is the objective of 14% of the managers.

We can expect that approximately half of the managers will be satisfied with cost reduction due to improvements in the above-mentioned areas, but no element in the above images directly supports gaining more sales of new or existing products.

We assume that, on the one hand, IT-related product innovations will push sales of new technological products, like wearables (GPS watches, health monitoring, sleep trackers, etc.). On the other hand, there is the wish to cut costs in production, which, due to the fierce competition, would press prices down and increase sales quantities. Will this trend also increase net profit? The short answer is: it depends; companies need to analyze the full impact on the bottom line very carefully.

The new digital technology can help reduce the “Time to Market”, the time it takes to run a new product development project from idea to market launch, including customer contribution. One question is: by how much? The answer depends a lot on the specific technology of the new products. Another question is whether Industry 4.0 can reduce production lead time and what this could do to improve sales.

The mentioned improvements in Sales / Aftersales have an impact only on after-sales activities, but are they sufficient to create new sales?

It seems that the main vision of McKinsey and many other big players is limited to cutting operating expenses, which is fine for bringing certain value, but it is NOT a revolution. The tough reality of cutting costs is that it cannot be focused; it is spread over many cost drivers. It requires a lot of management attention and usually brings limited net business value. The question is: what if that management attention had been directed at giving higher value to more customers?

We understand that when some of the most relevant Industry 4.0 technologies are implemented, and when the technological changes are combined with the right management processes, achievements like cutting lead times by 20-50% are not only possible, but should also dramatically improve the general responsiveness to customers and make the organization truly reliable in meeting all its commitments.

But is it sufficient that the technology is installed and used to achieve such results?

And is it enough to reduce the time to market, or to cut the production lead time, to get better business results?

Significantly improved business results are achieved if and only if at least one of the following two conditions applies:

- Sales grow, either from selling more or from charging more without losing too many sales because of the price increase.

- Costs are cut in a way that does not harm the delivery performance and the quality from the customer perspective.

The above should be the top objectives of any new move by management, including the decision to implement a new technology such as one of the Industry 4.0 elements.

On top of carefully checking how any of the Industry 4.0 components could achieve one, or both, of the above conditions, a parallel analysis has to be carried out to identify the negative branches: the variety of possible harms that might be caused by the new technology.

For instance, the use of any 3D printer is limited by the basic materials that the particular printer can use. If this limitation was not considered at the time the decision to use such a printer was made, it could easily turn the use of the “state-of-the-art” technology into a farce.

We suggest that every manufacturing organization consider using selected parts of Industry 4.0 in order to achieve one or both of the above top objectives, reaching a higher level of business achievement.

An especially effective tool for analyzing any specific element of Industry 4.0 is the Six Questions on the Value of New Technology, developed by Dr. Eli Goldratt. The first four questions first appeared in Necessary but Not Sufficient, written by Goldratt with Schragenheim and Ptak.

Question 1: What is the power of the new technology?

This is a straightforward question about what the new technology can do, relative to older technologies, and also what it cannot do.

For instance, IoT makes it possible to use PLC (programmable logic controller) sensors on machines to send precise information about the state of a machine to a web page: whether it functions properly or there is a problem. Predictions about the next maintenance step, based on machine data, are useful as well, because the results can help to avoid unexpected machine downtime and to exploit the constraint.

Question 2: What current limitation or barrier does the new technology eliminate or vastly reduce?

This is a non-trivial question and it is asked from the perspective of the user. In order for a new technology to deliver value there has to be at least one significant current limitation that negatively impacts the user. Overcoming this limitation is the source of value of the new technology. It is self-evident that clearly verbalizing the limitation for the user is key to evaluating the potential value of the new technology.

The leading example of using PLC sensors to provide online information to a variety of relevant users reveals that the limitation is the current need to have an operator physically near the machine to get information that could lead to an immediate action. We do not consider the capabilities of the PLC itself as the new technology in this analysis, as this is no longer truly a “new technology”. The new concept is to use the Internet to reach far-away people who can gain, or help others to gain, from the online information on the current state of the machine and the specific batch being processed.

There are two different uses for such immediate information. The first is when there is a problem in the flow of products, which could be a technical fault or bad-quality materials. The second is checking the likelihood of being able to respond to a new situation, like changing over the production line to process a super-urgent request or handling an unexpected delay. Today, the operator at the actual location has to get the fresh information and communicate it to certain people who appear on a predefined list. The operator is also expected to update the IT system so that the next actions can be considered. Overcoming the limitation means the flow of information does not need anybody at the physical location. Depending on the technology, the reactive actions could also be taken from afar.

Question 3: What are the current usage rules, patterns and behaviors that bypass the limitation?

This question highlights an area that is too often ignored by technology enthusiasts. Assuming the current limitation causes real damage, ways to reduce its negative impact are already in use. For instance, before cellular phones there were public phones spread all over the big cities to allow people to contact others from wherever they were. Devices like a beeper or pager were used to let someone far away know that somebody was looking for her. It is critical to clearly verbalize the current means of dealing with the limitation, for two different reasons. One is to better understand the net added value of the new solution provided by the new technology. The other is to understand the current inertia that might affect the users once the new solution is provided. This side is further explained and analyzed through the next question.

Today the industrial manufacturing landscape is roughly divided into two parts. The first consists of factories that have had a high level of automation for many years. These factories often belong to process industries that use fully automated production lines for chemicals, pharmaceuticals, etc. The machines and processes are connected by an independent data network that includes analysis. The production line and related processes are monitored in a dedicated control room, where the operator watches the information on a big screen and, when spotting a problem, finds the best solution or calls for help.

In addition, we can also find many small and medium-sized enterprises (SMEs) running modern machine tools with powerful controls and integrated sensors. These machines already provide all the data needed for analysis, but in most cases the data is left unused. It is also not very common to store information about problems in the production flow in a database that can feed future analyses on improving the uptime of the production line. Operators can fix mainly small issues. They have to call the external supplier's service in case of bigger problems with the machines. Bypassing the problem until it is resolved is typically managed by the operator and/or by a cross-functional team. With today’s technology, most of the information given to the various decision makers is updated only up to the previous day. So, urgent requests and unexpected delays might wait until the next day to be fully handled.

Question 4: What rules, patterns and behaviors need to be changed to get the benefits of the new technology?

Answering this question requires clearly detailing the optimal use of the new technology to achieve the maximum value. The behavior when the new technology is already operational is, in many cases, different from the behavior without that technology. The value of cellular technology is fully drawn only when the users carry their phones with them all the time. There are many other ramifications of the change imposed by implementing the new technology, like being very careful not to lose our phone. New rules have to be developed to guide us in drawing the most value from the new technology.

Industry 4.0 is pushing the idea that all available machine data should be used for monitoring, control and analysis. Modern machines can be connected directly to the IoT, while older machines need PLC sensors installed to provide data to the internet. A continuous stream of collected data is stored in a “Cloud”, somewhere on a server farm of an external service provider.

The idea of having the PLC information within immediate reach from everywhere adds value if and only if people who have not been exposed to the information before not only get the information but are also able to use it. In order to use immediate online information one has to be aware that it is there. As the true value of the new solution is the speed of getting truly updated information, there is a basic need to be able to set an alarm that makes the relevant person aware that something important needs attention immediately. This also means that effective analytics are needed to note when the information becomes critical, and to whom. This requirement should be part of answering question 4. The more general lesson is that exposing the user to a huge continuous stream of data from manufacturing, sales and the supply chain is a problem for which the new technology has to offer a solution.
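The requirement to alert only the relevant person, and only when the information becomes critical, can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the status values, the `is_constraint` flag, the role names and the routing table are hypothetical and do not come from any specific IoT product.

```python
from dataclasses import dataclass


@dataclass
class MachineReading:
    machine_id: str
    status: str          # assumed vocabulary: "ok", "warning", "fault"
    is_constraint: bool  # True if the machine is the capacity constraint


# Hypothetical routing rules: which roles must be alerted in which situation.
# Any (status, is_constraint) combination that is not listed raises no alert,
# so routine readings never reach a manager.
ROUTING = {
    ("fault", True): ["production_manager", "maintenance_lead"],
    ("fault", False): ["maintenance_lead"],
    ("warning", True): ["maintenance_lead"],
}


def recipients_for(reading):
    """Return the roles that should be alerted for this reading (may be empty)."""
    return ROUTING.get((reading.status, reading.is_constraint), [])


def alerts(readings):
    """Reduce a stream of readings to the few that need immediate attention."""
    result = []
    for reading in readings:
        people = recipients_for(reading)
        if people:
            result.append((reading.machine_id, reading.status, people))
    return result


if __name__ == "__main__":
    stream = [
        MachineReading("M1", "ok", True),
        MachineReading("M2", "fault", False),
        MachineReading("M3", "fault", True),
        MachineReading("M4", "warning", False),
    ]
    for machine_id, status, people in alerts(stream):
        print(machine_id, status, "->", ", ".join(people))
```

The design choice worth noting is that the routing table, not the data stream, carries the focus: most readings produce no alert at all, and a problem on the constraint reaches more people than the same problem elsewhere.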

When everything is connected with everything else, not just inside the company, it means a direct connection with the external world via the IoT. This move could create an opportunity for a new level of business, but the rules and wide ramifications of such a connection have to be examined very carefully. For instance, OEMs could have full access to all kinds of data from every OEM supplier, which should enhance win-win collaboration between the players. But this requires all the players to intentionally strive for this kind of collaboration. The technology is just the enabler for the intentions. Creating this kind of transparency is a necessary condition for effective win-win collaboration.

The connectivity of everything is truly beneficial only with the right focus in place, preventing the human managers from being overwhelmed and confused by the ocean of data. This insight of having to maintain the right focus, the most basic general insight of the Theory of Constraints (TOC), is absolutely relevant for evaluating the potential contribution of every Industry 4.0 element, as it is so easy to lose focus and get no value at all.

Question 5: What is the application of the new technology that will enable the above change without causing resistance?

Resistance is usually raised because a proposed change might cause a negative, usually unintended, consequence. Every new medical drug for curing an illness also causes negative side effects, sometimes bigger than the benefit of the cure. This example carries a wider message: the characteristic is much more general than medical drugs.

It is crucial not just to identify all the potential new negatives that the new technology would cause, but also to think hard about how to trim them. The transition from film cameras to digital ones raised the negative consequence of having too many pictures taken. Over the years some solutions for organizing the photos in a more manageable way have appeared. Had the thinking on that problem started sooner, the added value would have been much higher. This is a crucial part of the analysis: to give the negatives much thought, in spite of the natural tendency to be happy with the new value.

It is advisable to analyze every IoT idea for its probable negatives. A generic negative of almost all electronic devices is that when they fail to function properly the damage is usually greater than with the previous technologies. This means stricter quality analysis is absolutely required, plus keeping replacement electronic cards or devices in stock.

Question 6: How to build, capitalize and sustain the business?

This question is a reminder that the value of the new technology, plus all the decisions around it, is part of the global strategy of the company.

How does the above analysis fit with the top objective of the organization? Does the plan for extracting value from the new technology provide synergy with the other strategic efforts required for achieving the goal?

So, here in the sixth question the global aspects of the proposition to implement a specific application of the new technology have to be analyzed. Actually, when several applications of new technologies are considered, then question 6 should apply to all of them together. Thus, when analyzing the various elements of Industry 4.0, the first step is choosing several for more detailed analysis; the last step in the analysis is evaluating the global strategy and deciding which ones, if any, to implement and what other actions are required to draw the expected value as soon as possible.

For the leading example, the answers to the previous questions should reveal by how much linking the PLC stream of data to the Internet would either increase sales or significantly reduce cost.

Suppose that the company has an active constraint in a specific machine or a whole production line, and the constraint is frequently stopped due to various problems. In this case a quick-response mechanism, based on fast analysis of the PLC information and on immediately reaching the right people who can instruct the operator how to fix the problem, is truly worthwhile. The generated added value, both from keeping the customers unharmed and from superior exploitation of the capacity constraint, is high.
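A rough, hedged sketch of how that added value could be estimated is given below. It rests on assumptions that are not stated in the text: that every hour recovered on the active constraint can be sold, and that the company knows its throughput per constraint hour (sales minus truly variable costs). All numbers are hypothetical.

```python
def recovered_constraint_hours(stops_per_month, hours_per_stop_before, hours_per_stop_after):
    """Hours of constraint capacity regained per month thanks to faster response."""
    return stops_per_month * (hours_per_stop_before - hours_per_stop_after)


def added_throughput(recovered_hours, throughput_per_constraint_hour):
    """Extra monthly throughput, assuming every recovered constraint hour can be sold."""
    return recovered_hours * throughput_per_constraint_hour


if __name__ == "__main__":
    # Hypothetical figures: 10 stops a month, each shortened from 3 hours to 1 hour,
    # and 500 currency units of throughput per hour on the constraint.
    hours = recovered_constraint_hours(10, 3.0, 1.0)
    print(hours, added_throughput(hours, 500.0))
```

The same calculation for a machine that is not the constraint would show no added throughput, since its recovered hours only add idle capacity. That is why the example in the text insists on an active constraint.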

Add to this the decision to use 3D printers to overcome management's limitation in judging new product designs from the original drawings, as managers might not be capable of looking at 2D drawings and imagining the finished product. The cost of producing prototypes restricts the number of models available for management to judge the design, and the number of alterations is also limited. Using 3D printers eliminates the limitation. After answering the rest of the questions, the organization has to consider question 6 for both elements of Industry 4.0 and decide whether together the value is even greater. If we consider the possibility that current prototypes of new products have to compete for the capacity of the constraint, while using the 3D printer bypasses the constraint, we could realize the synergetic added value: improved product design that could enhance sales, while the capacity constraint is better exploited.

The overall conclusion has to highlight how sensitive the strategic analysis is when Industry 4.0 is seriously considered. The contribution of the six questions could be truly decisive.